Contents

1. Introduction

2. The Four-Block People Analytics Model

3. Sources of People Data

4. How to create Robust People Data Sets with Strong Correlations

- People Metrics Definition Process

- The People Metrics Definition Workshop for Operational Managers

- Restricted Range, Babies & Bathwater

- Technical Reasons Why Data May Not Hang Together

5. Take Away

1. Introduction

Making sense of people data is a struggle for many HR professionals. People analytics is only effective when data collection is focused on achieving a particular management objective, such as improving talent management processes like recruitment or retention, or demonstrating HR’s contribution to the value/ROI of these processes. Despite this core concept of people analytics, many companies simply analyse the data nearest to hand, with results that are anything but insightful. Ad hoc data analysis of this kind almost invariably ends in project failure, delivering only a wasted budget and a belief that people analytics is just hype.

As most technical analysts will tell you, people analytics project failure usually boils down to just one thing: hardly any significant correlations could be found in the data.

This article will help you harness people analytics, and avoid project failure, by presenting a systematic, cost-effective methodology for creating robust data sets that correlate. We will be focussing on two tools: the People Metrics Definition Process and People Metrics Definition Workshop for Operational Managers.

2. The Four-Block People Analytics Model

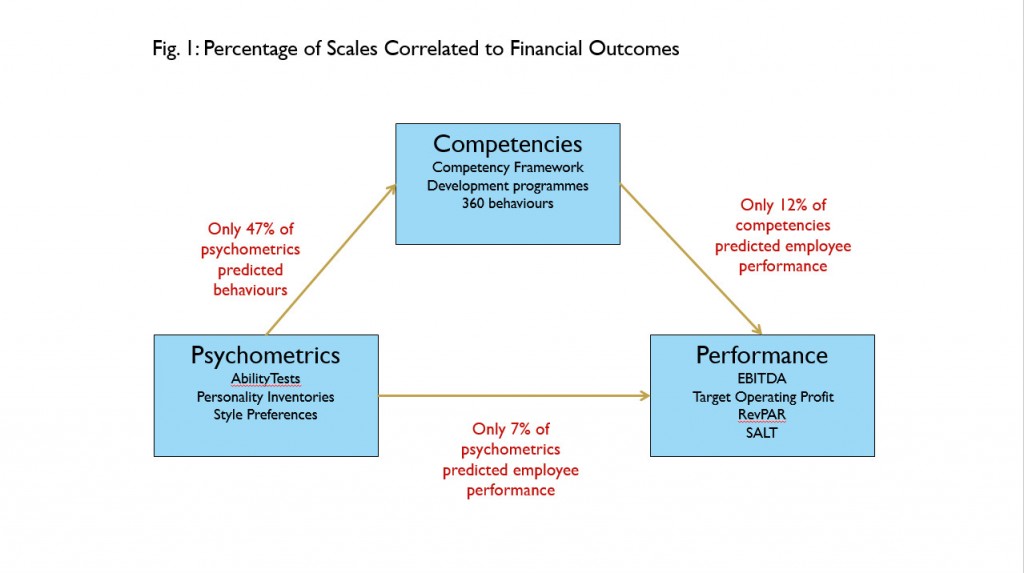

The People Metrics Definition Process methodology holds the premise that the primary – and perhaps only – reason for investing in people programmes – such as recruitment, development, succession planning, and compensation – is to deliver the workforce competencies required to drive the employee performance needed to achieve specific organisational objectives. Graphically, this can be expressed as follows:

| People Programmes | → | Workforce Competencies | → | Employee Performance | → | Organisational Objectives |

If any link in this chain – the Four-Block People Analytics Model – is broken, investments in people programmes are not delivering the organisational objectives aimed for.

The strength of a link between any two blocks in the model is measured by their statistical correlation. When two blocks are correlated, a change in the values of one block can be predicted from a change in the values of the other. To put this into a real-world example: a training programme improves employees’ competency scores, which in turn results in a predictable, corresponding increase in employee performance ratings. This would show that competencies and employee performance are correlated. However, where there is a poor correlation between competencies and employee performance, training programmes which increase competency scores will not result in increased employee performance. From a business perspective, this means that the training spend was a wasted investment.
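As a rough illustration of what measuring a link involves, the sketch below computes a Pearson correlation coefficient between competency scores and performance ratings. The article does not prescribe a particular statistic, so the choice of Pearson's coefficient is an assumption, and the function name, variable names and data values are all hypothetical.

```python
from math import sqrt

def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two equal-length sequences with at least 2 values")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined when one variable is constant")
    return cov / sqrt(var_x * var_y)

# Hypothetical data, one entry per employee (illustrative values only).
competency_scores = [2.0, 3.0, 3.5, 4.0, 4.5, 5.0]
performance_ratings = [2, 3, 3, 4, 5, 5]

link_strength = pearson(competency_scores, performance_ratings)
print(f"competency -> performance correlation: {link_strength:.2f}")
```

A value close to 1 suggests a strong link between the two blocks, while a value close to 0 suggests the link is weak or broken. The same calculation can be repeated for each adjacent pair of blocks in the model.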

3. Sources of People Data

The way you obtain the data used in each block is essential for establishing correlations.

1. Data sources for organisational objectives

Organisational objectives data reflects the extent to which business objectives are being achieved. This data is often expressed in financial terms, although there is an increasing drive towards the inclusion of cultural and environmental measures. A common and critical pitfall to avoid here is to consider workforce objectives (such as retention or engagement), rather than organisational objectives.

2. Data sources for employee performance

Employee performance data is typically generated by managers in the form of multidimensional ratings obtained during performance reviews. An employee performance rating should simply reflect the employee’s potential to contribute to organisational objectives. Note that the term “potential” is used deliberately to emphasise that employees who do not fully contribute to organisational objectives today may do so in the future if they are properly trained and developed (assuming that they can be retained). A common error here is confusing employee performance measures with competency measures, which we define next.

3. Data sources for competencies

Competencies are observable employee behaviours hypothesized to drive the performance required to deliver organisational objectives. The word “hypothesized” is used to emphasise that the only way of knowing whether the company is investing in the right competencies is to measure their correlation with employee performance. If the correlation is low, it would be reasonable to assume that the company is working with the wrong competencies (or that there is a problem with performance ratings).

There are three problems usually associated with competency data. First, competency ratings are often based on generic organisation-wide competency frameworks. The resulting competencies are therefore typically so general as to be useless for any specific role.

Second, competency frameworks are often created by external consultancies lacking full insight into the real competencies required to drive employee performance in a particular sector and organisational culture. The only way to create robust competency frameworks is to obtain them from the operational personnel to whom the competencies apply. The People Metrics Definition Workshop for Operational Managers (which we explore later) will achieve this.

Finally, many companies confuse employee competencies with employee performance, presenting competencies as part of an employee’s performance rating. As noted above, competencies are merely hypothesized predictors of employee performance. They are not standalone measures of employee performance. A real-world example: good communication competency helps a salesperson to sell more, but a high communication competency score will not make up for missing a sales target.

4. Data sources for people programmes

Programme data usually reflects the efficiency (as opposed to effectiveness) of talent management programmes, for example the length of time it takes to fill a job role or the cost of delivering a training programme. Programme data is usually sourced via the owner of the relevant people process.

For further ideas about people data measurement, visit www.valuingyourtalent.com

4. How to create robust people data sets with strong correlations

Here are four remedies for creating a Four-Block People Analytics model that actually correlates:

1. People Metrics Definition Process

The most common reason for poor correlations is using data not specifically generated with a defined purpose in mind. This is like trying to cook a sticky-rice stuffed duck without buying rice or a duck – it’s simply not going to end well.

The best way to cook up a successful people analytics project is to use a People Metrics Definition Process. This starts with the end in mind (namely by first defining the business objectives data) and then works backwards through the Four-Block People Analytics Model:

1. Organisational objective

First ask: “What organisational objective(s) need to be addressed?” Where possible, choose high profile objectives such as those which appear in the annual report (such as revenues, costs, productivity, environmental impact, and so on). Then narrow down this list to those metrics which, for example, reflect targets that are being missed. This approach ensures your people analytics has relevance.

2. Employee performance

Now consider how the employee performance required to achieve these objectives will be measured. This is discussed below, under the heading Restricted Range, Babies and Bathwater.

3. Competencies

Next define the competencies likely to be needed to drive this employee performance. Note that global competencies usually exhibit far lower correlations than role-specific competencies. Competency definition is discussed below, under the heading The People Metrics Definition Workshop for Operational Managers.

4. People Programmes

Finally consider the kinds of people programmes that will be required to deliver these competencies and also how to measure the efficiency of these programmes. Bear in mind that a people programme is only as effective as the competency metrics that it produces.

Companies performing the above steps in any other order should not be surprised if they end up with poor correlations between their people programmes, competencies, employee performance and organisational objectives.

2. The People Metrics Definition Workshop for Operational Managers: Avoiding the Talent Management Lottery

Probably the second most common reason for poor correlations in the Four-Block People Analytics Model is the use of inconsistent employee performance data. Inconsistent performance data is usually the result of managers not knowing what good performance looks like in their teams. The company then lacks an analytical basis for distinguishing between its high and low performers, which turns the allocation of development, compensation and succession expenditures into a talent lottery rather than an analytically based process.

By far the best (and easiest) way of transforming a performance management lottery into an analytical programme is the People Metrics Definition Workshop for Operational Managers.

This is simply a facilitated meeting in which operational managers are guided through the People Metrics Definition Process. The key deliverables are:

- A set of people programme, competency, employee performance and organisational objectives metrics

- Increased engagement between operational managers and the data that they will be using to manage their teams. You simply cannot get operational managers to engage with HR processes and data if the model’s metrics definitions are provided by non-operational parties, such as external consultants or HR. Even if these external metrics are of high quality, operational managers will still tend to treat them as tick-box exercises because they do not believe (probably correctly) that non-operational parties can truly understand the business and its culture as well as they do.

The role of HR and/or external facilitators in the People Metrics Definition Workshop is therefore not to provide content but to expertly facilitate the gathering of people metrics and to help managers reach a consensus.

In practice, this workshop has an enormously positive impact on correlations within the Four-Block People Analytics Model.

3. Restricted Range, Babies and Bathwater

Another problem that comes from not properly distinguishing between high and low performing employees is known as Restricted Range. Restricted range means that team member performance ratings tend to be clustered around the middle rather than using the full performance rating range. For example, the graph below shows a typical team performance distribution of a company using a 1 (poor performance) to 6 (high performance) rating scale. Note the number of ratings clustered around 4 and 5 instead of using the full 1 – 6 range:

There are many possible reasons for restricted range. Sometimes it’s because managers simply do not know what good performance looks like, as discussed above. Another common cause is that managers avoid low scores in order to maintain team engagement and unity; on the flip side, they may avoid high scores so as to avoid perceptions of favouritism.

Restricted range carries two serious implications:

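- Restricted range does not only restrict employee performance ratings, which it does by definition. It also seriously limits the possibility of decent correlations in the Four-Block People Analytics Model, because ratings that barely vary have little variation to correlate with anything else.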

- If everyone in a team has similar ratings, then managers must be using some other basis – some other scale even – for making promotions and salary decisions. Secret scales cannot be good for team morale or guiding employee development, compensation and succession planning investments.
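One simple way to see the first implication is to compare the correlation across the full rating scale with the correlation among only those employees rated 4 or 5. The sketch below does this on a small, entirely hypothetical data set. The `outcome` values stand in for any measure from the next block of the model, and Pearson's coefficient is assumed as the correlation statistic.

```python
from math import sqrt

def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)

# Hypothetical (rating, outcome) pairs on a 1-6 rating scale.
# Each outcome is the rating plus or minus 1, so the underlying
# relationship is equally noisy at every point on the scale.
employees = [
    (1, 2), (1, 0), (2, 1), (2, 3), (3, 4), (3, 2),
    (4, 3), (4, 5), (5, 6), (5, 4), (6, 5), (6, 7),
]

# Correlation when the full 1-6 range is in use.
full_r = pearson([r for r, _ in employees], [o for _, o in employees])

# Correlation when only ratings of 4 and 5 are present.
restricted = [(r, o) for r, o in employees if r in (4, 5)]
restricted_r = pearson([r for r, _ in restricted], [o for _, o in restricted])

print(f"full range:       r = {full_r:.2f}")
print(f"ratings 4-5 only: r = {restricted_r:.2f}")
```

With these illustrative numbers the correlation falls from roughly 0.86 to roughly 0.45, even though the relationship between rating and outcome is identical in both cases. Only the spread of the ratings has changed.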

Addressing restricted range is usually a cultural issue with causes that must be carefully understood before attempting intervention. One remedy usually involves explaining to managers that more differentiation between their high and low performers will result in the right team members getting the right development which in turn will result in higher team performance for the manager.

Perhaps this is the time to raise the thorny topic of employee ranking. It has received such bad press that employee rating/ranking is supposedly no longer used at companies such as Microsoft, GE and the Big 4 consultancies.

A little reflection reveals that avoiding employee performance ranking is a case of “throwing the baby out with the bathwater”, because if employees are really no longer measured, then on what basis are promotions, salary increases and development investments made? Presumably it means that the “real” employee performance measures have been pushed underground into secret management meetings and agendas where favouritism and discrimination cannot be detected. This cannot be good for employer brand.

If additional proof is required that employee ranking will always exist, consider what would happen if a serious downturn forced these companies to lay off employees, as was recently the case with Yahoo! If employees are not ranked in some way, then any layoffs would appear to be random, which is a return to the talent management lottery scenario.

It must therefore be reasonable to assume that no matter what a company’s public relations department says, employee performance ranking still exists everywhere, even if it has been temporarily pushed underground in the past few years. If even more proof is required, confidentially ask an operational manager with whom you have a trusted relationship who in their team has the greatest and least performance potential. Next ask them whose performance potential lies somewhere near the middle. What you’re doing here is in fact getting the manager to articulate the “secret” scale that they use for salary increases and promotions.

An important role of people analytics professionals – and HR in general – is to contribute positively to an organisational culture where such scales are part of the analytics mainstream and not hidden in secret meetings. This ranking approach can be extended – and is indeed already used by many companies – by asking multiple managers to rank the employees and/or using a team 360.
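The article does not say how rankings from several managers should be combined. As one possible sketch, the example below averages each employee's rank position across managers. The averaging rule, the function name and all names and data are assumptions for illustration only.

```python
from collections import defaultdict

def consensus_ranking(rankings_by_manager):
    """Combine several managers' rankings by averaging rank positions.

    rankings_by_manager maps a manager name to a list of employees,
    ordered from greatest to least performance potential.
    Returns (employee, mean_rank) pairs, best first.
    """
    positions = defaultdict(list)
    for ranking in rankings_by_manager.values():
        for position, employee in enumerate(ranking, start=1):
            positions[employee].append(position)
    mean_rank = {e: sum(p) / len(p) for e, p in positions.items()}
    # Sort by mean rank, breaking ties alphabetically for a stable result.
    return sorted(mean_rank.items(), key=lambda item: (item[1], item[0]))

# Hypothetical rankings from three managers (best first).
rankings = {
    "manager_a": ["E1", "E2", "E3", "E4"],
    "manager_b": ["E2", "E1", "E3", "E4"],
    "manager_c": ["E1", "E3", "E2", "E4"],
}

result = consensus_ranking(rankings)
for employee, rank in result:
    print(f"{employee}: mean rank {rank:.2f}")
```

Averaging is only one option. Where managers disagree sharply about an employee, the spread of that employee's rank positions may be worth reviewing in its own right.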

The above ranking process will also prove useful to companies wishing to avoid legal accusations of random dismissal and layoff; Yahoo! for example would have benefited from this approach.

4. Technical reasons why data may not hang together

Finally, there are some technical statistical reasons why the Four-Block People Analytics Model data may not correlate, such as:

- The data set may not be large enough (i.e. you need a lot of data for proper analysis)

- If you’re using parametric (non-machine-learning) techniques, the data may not be sufficiently normally distributed and/or the relationships may not be linear. This is another good reason for companies to consider migrating to the use of machine learning techniques.
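To give a feel for the data set size point, the sketch below estimates an approximate 95% confidence interval for a correlation coefficient using the Fisher z-transformation. This technique is not named in the article and is brought in here as an assumption. It also presumes roughly normally distributed data, so it should be read as a rough guide only. The function name and the example values are hypothetical.

```python
from math import atanh, sqrt, tanh

def correlation_ci_95(r, n):
    """Approximate 95% confidence interval for a Pearson correlation r
    observed on n paired observations (Fisher z-transformation)."""
    if n <= 3:
        raise ValueError("need more than 3 observations")
    if not -1 < r < 1:
        raise ValueError("r must lie strictly between -1 and 1")
    z = atanh(r)                      # transform r to an approximately normal scale
    half_width = 1.96 / sqrt(n - 3)   # 1.96 standard errors either side
    return tanh(z - half_width), tanh(z + half_width)

# The same observed correlation with a small and a larger (hypothetical) team.
small_low, small_high = correlation_ci_95(0.3, 10)
large_low, large_high = correlation_ci_95(0.3, 200)

print(f"n = 10:  {small_low:.2f} to {small_high:.2f}")
print(f"n = 200: {large_low:.2f} to {large_high:.2f}")
```

With only 10 observations the interval spans zero, so a correlation of 0.3 cannot be distinguished from no correlation at all. With 200 observations the interval lies clearly above zero.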

5. Take Away

Poor correlations in the Four-Block People Analytics Model are a stark reminder that people analytics data needs to be collected with specific business objective outcomes in mind. Using any other form of data ultimately results only in wasted time and resources. The approach must also involve operational managers, who are critical to defining the metrics to be used. Only then can people analytics truly deliver on all that it promises.

Please do share your comments, thoughts, successes and failures with me below.

©Blumberg Partnership 2016

With thanks to Tracey Smith of Numerical Insights who kindly reviewed this article.